In testing, discussion usually centers on the quality of the software itself: how it is verified and evaluated. Customers, however, also need to know and control that the testing itself is going well: that the most important aspects are checked, enough tests are run, enough defects are fixed, and so on. In other words, the quality of testing, like that of any other work, must be evaluated against specific criteria. These criteria are called QA metrics. They are coefficients and indicators that give a picture of what is happening on a project, so that it can be evaluated and its processes improved if necessary. Metrics cover various areas of testing, for example requirements, the quality of the product itself, and the efficiency and quality of the work of the development and testing teams.

Test Coverage

- 21.02.2023

- Posted by: Admin

This article discusses one metric for evaluating test quality: test coverage. It expresses how densely tests cover the executable program code or the product requirements. As a rule, the more tests are written, the higher the test coverage. Keep in mind, however, that complete, 100% coverage of an entire product is not attainable in practice, because a product cannot be tested exhaustively.

There are several approaches to assessing test coverage:

- Requirements Coverage;

- Code Coverage;

- Test coverage based on control flow analysis.

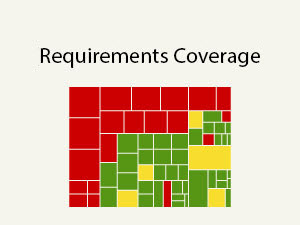

Requirements coverage shows how densely the product requirements are covered by tests. It is most informative when the requirements are fairly atomic.

The metric is calculated by the formula:

Test coverage = (number of requirements covered by test cases / total number of requirements) × 100%

To measure this coverage, the requirements must be broken down into individual items, and each item must be linked to the test cases that verify it. Test cases that do not verify any requirement of the product are unnecessary. Conversely, requirement items with no test case will not be tested, so it will be impossible to say with confidence whether they are implemented in the product and how the corresponding functions work.

The set of all links between test cases and requirement items is called a traceability matrix (trace matrix). Tracing and analyzing these links shows which test cases verify which requirements, and for which requirements test cases need to be written or added. It can also reveal requirements that have redundant test cases.
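As an illustration, the formula and the requirement-to-test links described above can be sketched in Python. The requirement IDs, test case IDs, and function names here are hypothetical and only show one possible way to hold the links in a dictionary; real projects usually keep them in a test management tool.

```python
# Hypothetical traceability data: requirement ID -> test cases that verify it.
trace_matrix = {
    "REQ-1": ["TC-1", "TC-2"],
    "REQ-2": ["TC-3"],
    "REQ-3": [],          # no test case written yet
    "REQ-4": ["TC-2"],
}
# Hypothetical full list of test cases in the project.
all_test_cases = {"TC-1", "TC-2", "TC-3", "TC-4"}


def requirements_coverage(matrix):
    """Percentage of requirements linked to at least one test case."""
    if not matrix:
        return 0.0
    covered = sum(1 for tests in matrix.values() if tests)
    return 100 * covered / len(matrix)


def uncovered_requirements(matrix):
    """Requirements for which no test case has been written."""
    return sorted(req for req, tests in matrix.items() if not tests)


def orphan_test_cases(matrix, test_cases):
    """Test cases that are not linked to any requirement."""
    linked = {tc for tests in matrix.values() for tc in tests}
    return sorted(test_cases - linked)


print(requirements_coverage(trace_matrix))              # 75.0
print(uncovered_requirements(trace_matrix))             # ['REQ-3']
print(orphan_test_cases(trace_matrix, all_test_cases))  # ['TC-4']
```

In this made-up data set, three of four requirements are covered, `REQ-3` still needs a test case, and `TC-4` does not verify any requirement.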

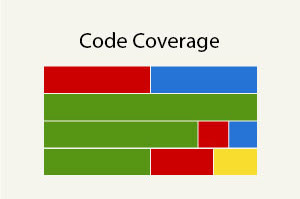

Code coverage shows how many lines of executable code are exercised when the test cases written for the product are run.

The metric is calculated by the formula:

Test coverage = (number of lines of code covered by test cases / total number of lines of code) × 100%

Special tools, such as Clover, determine which lines of code were executed when the test cases ran and which lines were exercised by particular test scenarios. This information helps you see which tests duplicate each other and which lines of code need additional checks. Code coverage is measured during white-box testing at the module, integration, and system levels.
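The calculation itself is simple once a tool has reported which lines each test executed. The following Python sketch assumes such a report is already available as sets of line numbers; the test names, line numbers, and function names are hypothetical, and the sketch does not reproduce the output format of Clover or any other tool.

```python
# Hypothetical report: executable line numbers and the lines each test executed.
executable_lines = set(range(1, 11))  # 10 executable lines
lines_hit_by_test = {
    "test_login_ok": {1, 2, 3, 4, 5},
    "test_login_bad_password": {1, 2, 3, 6, 7},
    "test_login_smoke": {1, 2, 3},
}


def line_coverage(executable, hits_by_test):
    """Percentage of executable lines run by at least one test."""
    if not executable:
        return 0.0
    hit = set().union(*hits_by_test.values()) & executable
    return 100 * len(hit) / len(executable)


def uncovered_lines(executable, hits_by_test):
    """Executable lines that no test has run."""
    hit = set().union(*hits_by_test.values())
    return sorted(executable - hit)


def redundant_candidates(hits_by_test):
    """Tests whose lines are all run by other tests as well."""
    result = []
    for name, lines in hits_by_test.items():
        others = set().union(
            *(hit for other, hit in hits_by_test.items() if other != name)
        )
        if lines <= others:
            result.append(name)
    return sorted(result)


print(line_coverage(executable_lines, lines_hit_by_test))    # 70.0
print(uncovered_lines(executable_lines, lines_hit_by_test))  # [8, 9, 10]
print(redundant_candidates(lines_hit_by_test))               # ['test_login_smoke']
```

A test flagged by `redundant_candidates` is only a candidate for review: two tests can run the same lines while checking different inputs or results.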

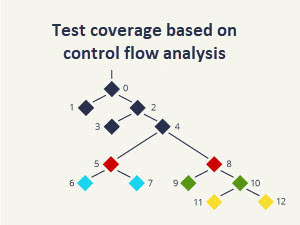

Test coverage based on control flow analysis is also a white-box technique, that is, one with access to the code, but here it is the coverage of execution paths through the application code that is checked. Test scenarios are created to cover these paths.

To test control flows, you need to build a Control Flow Graph.

It consists of the following main blocks:

- process block (one entry point and one exit point);

- alternative point (one entry point and two or more exit points);

- connection point (two or more entry points and one exit point).

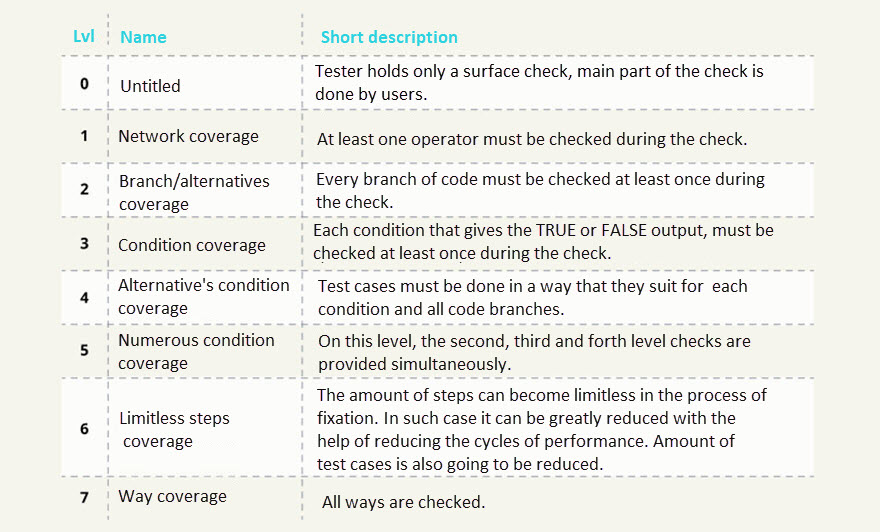
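To make the three block types concrete, here is a minimal Python sketch of a control flow graph for a single `if`/`else` followed by one statement. The node names, the dictionary representation, and the function names are assumptions made for illustration, not a standard notation.

```python
# Hypothetical control flow graph for:
#     if x > 0: a() else: b()
#     c()
# Each node maps to the list of nodes that can run directly after it.
cfg = {
    "entry": ["check"],
    "check": ["a", "b"],  # alternative point: one entry, two exits
    "a": ["join"],
    "b": ["join"],
    "join": ["c"],        # connection point: two entries, one exit
    "c": ["exit"],
    "exit": [],
}


def classify(graph):
    """Label each node by counting its incoming and outgoing edges."""
    incoming = {node: 0 for node in graph}
    for successors in graph.values():
        for nxt in successors:
            incoming[nxt] += 1
    kinds = {}
    for node, successors in graph.items():
        if len(successors) >= 2:
            kinds[node] = "alternative"
        elif incoming[node] >= 2:
            kinds[node] = "connection"
        else:
            kinds[node] = "process"  # includes the entry and exit nodes
    return kinds


def all_paths(graph, start, end, path=None):
    """List every path from start to end that visits no node twice."""
    path = (path or []) + [start]
    if start == end:
        return [path]
    paths = []
    for nxt in graph[start]:
        if nxt not in path:  # skip revisits so loops cannot recurse forever
            paths.extend(all_paths(graph, nxt, end, path))
    return paths


print(classify(cfg)["check"], classify(cfg)["join"])  # alternative connection
for p in all_paths(cfg, "entry", "exit"):
    print(" -> ".join(p))
```

This graph has two execution paths, one through `a` and one through `b`, so covering every path needs at least two test scenarios, for example one with `x > 0` and one with `x <= 0`.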

Control flows are tested at several levels of test coverage:

These are only some of the metrics by which the quality of testing can be assessed. Many others exist, such as the requirements stability coefficient, the reopened defects coefficient, and the effectiveness of tests and test sets. All of them aim to improve the quality of development and testing, increase user satisfaction with the product, and make the work of the whole team better organized and more efficient.